Real-time AI video processing. Open source.

Process live video streams with AI models in real time. The WebRTC pipeline runs at 200-400 ms latency and supports live video effects, face detection, and custom ML models.

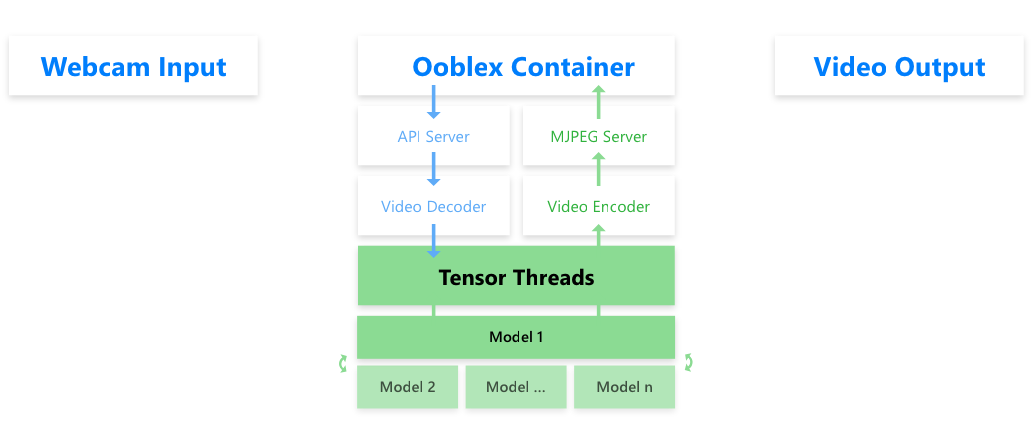

Simple architecture: WebRTC + Redis + RabbitMQ